Residential Proxies for SERP Tracking and Local SEO Monitoring in 2026

There was a time when ranking data was relatively universal. You ran a rank tracker, it pinged Google, and you got a number. Position seven. Done. That world is gone.

In 2026, Google and Bing don’t just show you a search result; they construct one specifically for you. AI-driven behavioral modeling, device fingerprinting, and what the industry now calls geofencing layers mean that a user in Austin, Texas, searching “best pizza near me” will see a completely different set of results than someone running the same query from a data center in Virginia, even if both are supposedly targeting Austin. The search engine knows the difference, and it adjusts accordingly.

For SEOs and agencies, this creates a dangerous blind spot. Standard rank trackers that rely on data center IP addresses are handed sanitized, often artificially “clean” SERPs: results that don’t reflect what a real user in that market is actually seeing. You’re optimizing for a reality that doesn’t exist.

This is why residential proxy networks have become foundational infrastructure for anyone serious about local SEO. Not a nice-to-have. Not a power-user trick. Table stakes.

This guide walks through exactly how residential proxies work, why they matter specifically for SERP tracking in 2026’s anti-scraping environment, and how to choose a provider that doesn’t quietly undermine everything you’re trying to measure.

What Is a Residential Proxy, Exactly?

The term gets thrown around a lot, so let’s be precise. At its core, a residential proxy is an intermediary server that routes your traffic through an IP address assigned by a real Internet Service Provider (ISP) to an actual physical household device: a home router, a smartphone on a mobile data plan, or a laptop connected to a neighborhood ISP.

That last part is what matters. The IP isn’t living in an Amazon Web Services rack in Ohio. It belongs to a real person’s real connection, in a real city, probably on someone’s real street.

Residential vs. Data Center: Why Search Engines Care

Search engines have become extraordinarily good at profiling incoming requests. One of the first signals they check is whether an IP address belongs to a known commercial hosting range. Data center IPs, even expensive, “rotating” ones, are catalogued, scored, and treated with deep suspicion by Google’s bot-detection stack.

A residential proxy IP, by contrast, looks exactly like a household consumer browsing the web. It carries ISP-level metadata, arrives from a legitimate residential netblock, and behaves the way a real user’s traffic does. This is the fundamental trust gap between the two categories.

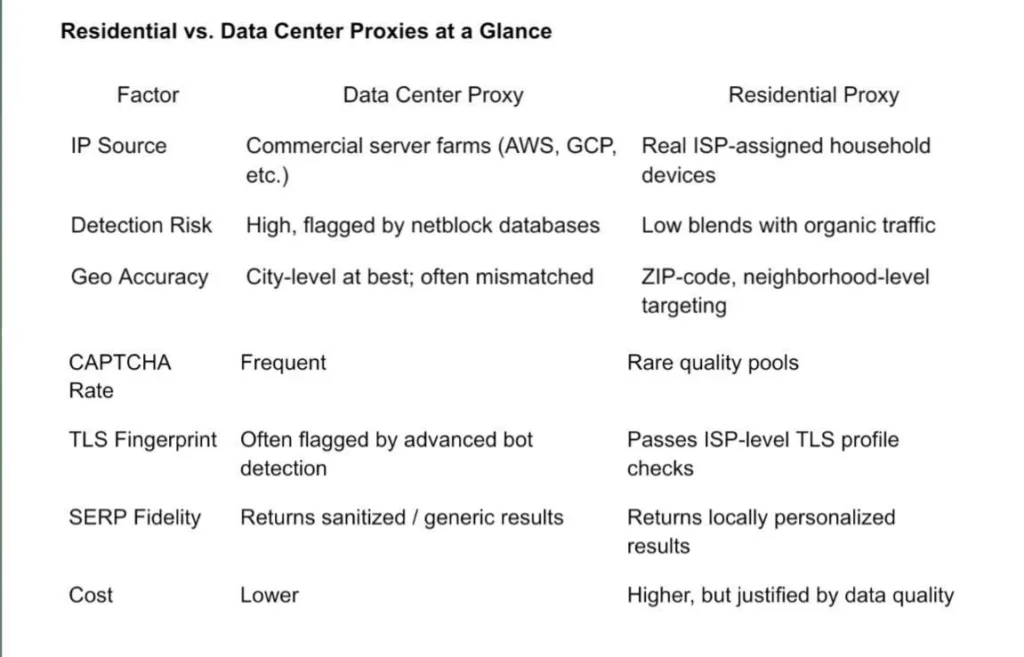

Residential vs. Data Center Proxies at a Glance

The table above captures the tradeoff plainly: data center proxies are cheaper, but you’re essentially paying to receive the wrong data faster. For local SEO monitoring, that’s not a bargain; it’s an expensive mistake.

Why SERP Tracking Requires Residential IPs in 2026

The “Near Me” Problem

If you manage SEO for a multi-location brand or a portfolio of local clients, you already know that “near me” queries and Google’s Local Pack, the map-based result cluster that appears above organic listings, are intensely geographic. We’re not talking city-level geography. We’re talking block-by-block geography.

Two users standing four blocks apart and searching “urgent care near me” can see entirely different Map Packs. The rankings in that three-listing cluster can swing wildly depending on the device’s perceived physical location. A rank tracker querying from a data center in the same city isn’t modeling this. It’s returning a flattened, averaged result that exists nowhere in the real world.

A residential proxy network with genuine ZIP-code or city-block targeting gives you something a data center never can: the actual result that an actual customer standing at a specific intersection would see.

The 2026 Anti-Scraping Stack

Google’s bot-detection infrastructure in 2026 has moved well beyond simple IP reputation checks. The current defense stack layers multiple signals:

- Advanced TLS fingerprinting, analyzing the SSL/TLS handshake to identify non-browser client libraries, even when the user agent is disguised.

- Behavioral cadence analysis, detecting query patterns that don’t match human browsing rhythms (too fast, too regular, too unvaried).

- IP reputation scoring, cross-referencing IPs against ASN registries to flag commercial hosting ranges instantly.

- Geofencing consistency checks, validating that a claimed geographic location matches the IP’s registered ISP location at a granular level.

High-quality residential IPs with proper IP rotation and session management pass these checks because they are what they claim to be: household connections. There’s no spoofing involved. The IP genuinely belongs to an ISP customer in the target city, which means the geofencing consistency check resolves cleanly.

Pairing residential IPs with anti-detect browsers, tools that also normalize TLS fingerprints and browser canvas signatures, takes this a step further and is increasingly standard practice among professional SEO teams.
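The IP reputation signal in the list above can be illustrated with a minimal sketch. It is not a description of how Google actually scores traffic; it only shows the general idea of matching an address against known hosting netblocks. The `HOSTING_RANGES` list is hypothetical and uses documentation-only address blocks as stand-ins for real cloud provider ranges.

```python
import ipaddress

# Hypothetical "known hosting" ranges. These are reserved documentation
# blocks used as stand-ins; a real list would come from published cloud
# provider ranges or an ASN database.
HOSTING_RANGES = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def looks_like_hosting(ip: str) -> bool:
    """Return True if the address falls inside any listed hosting range."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in HOSTING_RANGES)


if __name__ == "__main__":
    print(looks_like_hosting("192.0.2.44"))   # inside a listed range
    print(looks_like_hosting("203.0.113.9"))  # not in the list
```

A residential address passes this kind of check simply because it is not in a hosting range to begin with, which is the point the section makes.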

Maximizing Local SEO Monitoring Efficiency

The UULE Parameter + Proxy Pairing

Here’s a technique that separates sophisticated local SEO setups from amateur ones. Google’s UULE parameter, which is widely used but not officially documented, lets you encode a location directly into a search URL (a canonical place name in the most commonly described variant, or a coordinate in a newer one), asking Google’s servers to return results as if the query originated from that location. On its own, it’s useful. Paired with a matching residential IP from the same region, it’s remarkably powerful.

The combination works because it creates consistency across both the network layer (the residential IP saying “I’m in downtown Chicago”) and the request layer (the UULE string confirming “return results for this specific Chicago coordinate”). Mismatched signals, a UULE for Chicago combined with an IP from a Virginia data center, trigger exactly the skepticism you’re trying to avoid.

When a UULE-tagged request is routed through a residential IP from a Chicago ISP, the UULE location and the IP’s registered location are aligned, and you get back the genuine Local Pack a Chicago user would see.
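As a rough illustration, here is a sketch of building a UULE-tagged search URL. UULE is not officially documented, so the encoding below follows the community-described place-name variant (a fixed prefix, one character encoding the name’s length, then the Base64 of a canonical place name) and should be treated as an assumption that may change. The function names and the example place name are illustrative, not part of any official API.

```python
import base64
import urllib.parse

# Alphabet used to pick the single "length" character in the
# place-name UULE format (as commonly described; an assumption).
_UULE_ALPHABET = (
    "ABCDEFGHIJKLMNOPQRSTUVWXYZ"
    "abcdefghijklmnopqrstuvwxyz"
    "0123456789-_"
)


def build_uule(canonical_name: str) -> str:
    """Encode a canonical place name, e.g. 'Chicago,Illinois,United States'."""
    raw = canonical_name.encode("utf-8")
    if len(raw) >= len(_UULE_ALPHABET):
        raise ValueError("canonical name too long for this simple encoder")
    length_char = _UULE_ALPHABET[len(raw)]
    # Padding is stripped here, as in most published examples (assumption).
    encoded = base64.b64encode(raw).decode("ascii").rstrip("=")
    return f"w+CAIQICI{length_char}{encoded}"


def build_search_url(query: str, canonical_name: str) -> str:
    """Return a Google search URL carrying the query and the UULE value."""
    params = {"q": query, "uule": build_uule(canonical_name)}
    # urlencode percent-encodes the '+' in the UULE so it survives transport.
    return "https://www.google.com/search?" + urllib.parse.urlencode(params)


if __name__ == "__main__":
    print(build_search_url("best pizza near me", "Chicago,Illinois,United States"))
```

The resulting URL would then be fetched through a residential proxy located in the same metro, so that the network layer and the request layer tell the same story.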

Competitor Intelligence at the Local Level

Residential proxies don’t just improve the accuracy of your own rank tracking; they open up a whole layer of competitor intelligence that data center setups can’t access reliably. Localized paid ads, featured snippets, and “People Also Ask” boxes are all highly geographically sensitive. With the right proxy setup, you can see the exact ad copy a competitor is running in Phoenix, the featured snippet they’re winning in Miami, and the local-pack position they’ve locked down in three ZIP codes in Denver, all from a single monitoring workflow.

Sticky sessions matter here. For competitor ad monitoring in particular, you want to hold the same IP across a sequence of queries to simulate a real browsing session rather than triggering per-query IP rotation, which can look unnatural and surface inconsistent ad targeting.
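The difference between sticky and rotating sessions can be sketched in a few lines. This is a provider-agnostic illustration: the `assign_proxies` helper and the proxy labels are hypothetical, and real providers usually expose session control through their own gateway settings rather than a client-side loop like this.

```python
import itertools
from typing import Iterable, Iterator, List, Tuple


def assign_proxies(
    queries: Iterable[str],
    pool: List[str],
    sticky: bool,
) -> Iterator[Tuple[str, str]]:
    """Yield (query, proxy) pairs.

    sticky=True  -> every query in the batch shares the first proxy drawn
    sticky=False -> each query gets the next proxy in round-robin order
    """
    if not pool:
        raise ValueError("proxy pool is empty")
    rotation = itertools.cycle(pool)
    held = next(rotation)
    for query in queries:
        yield query, held
        if not sticky:
            held = next(rotation)


if __name__ == "__main__":
    pool = ["proxy-a", "proxy-b", "proxy-c"]  # hypothetical labels
    ad_queries = ["plumber phoenix", "emergency plumber phoenix"]
    for query, proxy in assign_proxies(ad_queries, pool, sticky=True):
        print(query, "->", proxy)
```

For a competitor ad sequence you would call it with `sticky=True`; for a broad keyword sweep, `sticky=False` spreads the queries across the pool.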

Scaling Without Triggering Cooldowns

Agencies tracking thousands of keywords across dozens of locations face a specific infrastructure challenge: Google’s rate-limiting behavior. Even with residential IPs, hammering the same IP with high-frequency queries will eventually trigger a cooldown or soft block. The solution is pool size. A large, diverse residential pool lets you distribute query volume across thousands of different IPs, keeping the per-IP request rate well within what looks like normal human browsing, and keeping your tracking infrastructure consistently unthrottled.
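The pool-size argument comes down to simple arithmetic, sketched below. Google does not publish rate limits, so the per-IP ceiling is an assumption you would have to calibrate yourself; `min_pool_size` is an illustrative helper, not a provider feature.

```python
import math


def min_pool_size(queries_per_hour: int, max_per_ip_per_hour: int) -> int:
    """Smallest number of IPs that keeps every IP at or under the ceiling."""
    if max_per_ip_per_hour <= 0:
        raise ValueError("max_per_ip_per_hour must be positive")
    return math.ceil(queries_per_hour / max_per_ip_per_hour)


if __name__ == "__main__":
    # Assumed workload: 12,000 queries per hour, and an assumed ceiling
    # of 10 queries per IP per hour to stay within human-looking rates.
    print(min_pool_size(12_000, 10))  # 1200
```

In practice you would want headroom above this minimum, since some IPs in any pool will be offline or already rate-limited at a given moment.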

Choosing the Best Residential Proxy for SEO in 2026

The market for residential proxy services has matured, but quality still varies significantly. When evaluating providers, the criteria below are non-negotiable for anyone doing serious local SERP work.

What to Look For:

- Pool size and diversity: Access to millions of IPs across 150+ countries, with genuine city-level and ZIP-code targeting, not just country-level flags slapped on server IPs.

- Success rate on search engine endpoints: Look for providers who can demonstrate 99%+ success rates specifically on Google and Bing. Generic success rates are misleading; search engines are the hardest target.

- Ethical IP sourcing: IPs should be sourced through transparent, consent-based networks. Because users opt in, ethically sourced pools also tend to have cleaner reputations, and the IPs behave like real users because they effectively are real users.

- ISP-level targeting: The ability to route through specific ISPs (Comcast, AT&T, Verizon, etc.) adds another layer of authenticity that matters for hyper-local accuracy.

- Flexible session control: Both rotating sessions (for broad keyword sweeps) and sticky sessions (for competitor ad monitoring or multi-step workflows) should be available.

For agencies looking for a balance between elite performance and cost-efficiency, providers like 9Proxy have become a go-to choice. Their clean IP pool and robust infrastructure allow SEOs to bypass even the most aggressive bot-detection systems, making them a top contender when you need to buy residential proxy bandwidth that actually delivers local results.

The Budget Factor: Why the Cheap Residential Proxy Math Doesn’t Add Up

Price is always part of the conversation, and there are genuinely good value options in the residential proxy market. But there’s a pattern worth understanding before you optimize purely for cost.

A cheap residential proxy offering, and there are plenty of them, typically priced at a fraction of established providers, tends to source its IPs from pools with poor hygiene. Either the IPs were used heavily by previous customers and are already flagged by Google’s reputation systems, or they were sourced through opaque means that result in unpredictable behavior. Either way, the practical outcome is the same: a higher rate of blocked requests, more CAPTCHAs, and data that requires manual validation before you can trust it.

The hidden cost of a flagged residential IP isn’t just the failed request; it’s the compounding effect of building an SEO strategy on data you can’t verify.

The Clean IP ROI Calculation

Here’s a more useful way to frame the cost question. Suppose a budget provider costs $3/GB and a premium provider costs $8/GB. On the surface, the budget option looks like a 62% savings. But if the budget pool delivers a 70% success rate on Google, meaning 30% of your requests come back with a CAPTCHA, a block, or a distorted result, you’re paying for data you can’t use. On bandwidth alone, that works out to roughly $4.29 per usable GB ($3 ÷ 0.70) against about $8.08 ($8 ÷ 0.99) for a premium pool delivering 99% clean results, so the apparent 62% savings has already shrunk to about 47% before any other cost is counted.

Add in the cost of CAPTCHA-solving services, the engineering time to filter bad results, and the risk of making optimization decisions based on inaccurate SERP data, and the economics of quality residential infrastructure become clear pretty quickly.
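Using the example prices and success rates above, the comparison can be sketched as follows. On bandwidth alone the budget pool still comes out cheaper per usable GB; what can tip the balance is the extra spend per purchased GB on CAPTCHA solving and result filtering. That figure is modeled here by a hypothetical `overhead_per_gb` parameter, and the $3 overhead in the usage example is an assumption for illustration only.

```python
def cost_per_usable_gb(
    price_per_gb: float,
    success_rate: float,
    overhead_per_gb: float = 0.0,
) -> float:
    """Effective cost of one GB of usable SERP data.

    overhead_per_gb is extra spend per purchased GB (CAPTCHA solving,
    filtering, re-runs); it is an assumed input, not a measured value.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return (price_per_gb + overhead_per_gb) / success_rate


if __name__ == "__main__":
    budget = cost_per_usable_gb(3.0, 0.70)
    premium = cost_per_usable_gb(8.0, 0.99)
    print(round(budget, 2), round(premium, 2))  # 4.29 8.08

    # With an assumed $3 of cleanup overhead per purchased GB,
    # the budget pool becomes the more expensive option.
    print(cost_per_usable_gb(3.0, 0.70, overhead_per_gb=3.0) > premium)  # True
```

With these numbers the budget option overtakes the premium one once its overhead exceeds roughly $2.66 per purchased GB, which is why the cleanup costs matter more than the sticker price.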

Future-Proofing Your Local SEO Strategy

The through-line of everything in this guide is the same: local SEO in 2026 is fundamentally a data quality problem. The strategies, the content, the link building, all of it depends on your ability to accurately measure what’s actually happening in the SERPs your target users see. And that measurement problem, given how search engines now operate, is essentially unsolvable without high-quality residential proxies.

This isn’t a niche tool for power users anymore. Any agency tracking local rankings for clients, any in-house SEO team managing multi-location visibility, any brand that depends on local search traffic: they’re all operating with distorted data if they’re not using a proper residential proxy setup. That gap between what data center IPs see and what real users see is only going to widen as search personalization and geofencing become more sophisticated.

One practical parting note: whatever provider you choose and however sophisticated your setup becomes, always start new monitoring workflows with a small test batch. Run 50 to 100 keywords through the proxy before scaling to thousands. Validate that the geo-targeting is matching your expectations, that the UULE pairing is producing consistent results, and that your success rate is meeting the threshold you need. Catching configuration issues at a small scale is painless. Discovering them after a month of data collection is expensive.
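A test batch check along these lines might look like the sketch below. The `BatchResult` fields and the 95% thresholds are assumptions chosen for illustration; how you detect a usable SERP or infer the observed city depends on your own parser.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class BatchResult:
    keyword: str
    ok: bool            # a usable SERP came back (no CAPTCHA or block)
    expected_city: str  # city the workflow was targeting
    observed_city: str  # city inferred from the returned SERP (hypothetical)


def batch_passes(
    results: List[BatchResult],
    min_success: float = 0.95,
    min_geo_match: float = 0.95,
) -> bool:
    """Return True if the test batch meets both thresholds."""
    usable = [r for r in results if r.ok]
    if not results or not usable:
        return False
    success_rate = len(usable) / len(results)
    geo_match = sum(r.expected_city == r.observed_city for r in usable) / len(usable)
    return success_rate >= min_success and geo_match >= min_geo_match


if __name__ == "__main__":
    sample = [BatchResult(f"kw{i}", i >= 3, "Chicago", "Chicago") for i in range(100)]
    print(batch_passes(sample))  # 97 of 100 usable, all geo-matched
```

Only once a batch like this passes would you scale the same configuration to the full keyword set.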

The infrastructure exists to do this right. The only remaining question is whether the data you’re working from actually reflects the world your customers are searching in.